Breast Imaging Medical Agent (BIMA)

Rayleen Ramos

Co-Presenters: Individual Presentation

College: Hennings College of Science, Mathematics and Technology

Major: MS Computer Science

Faculty Research Mentor: Meng Xu, Kuan Huang

Abstract:

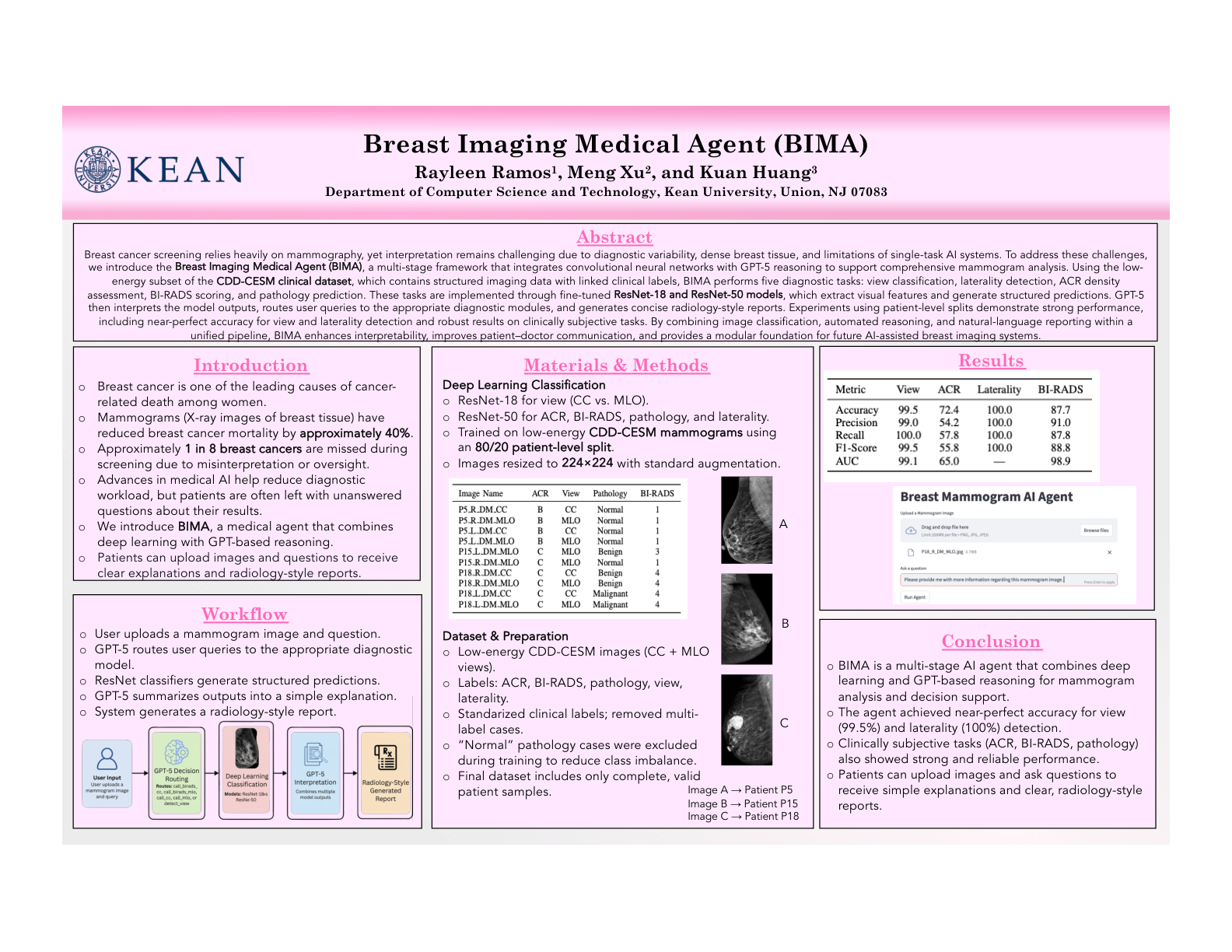

Mammography is a critical screening tool for the early detection of breast cancer, yet interpretation errors remain common because of complex image patterns, dense breast tissue, and inconsistency among radiologists' readings. These challenges can lead to missed diagnoses, delayed communication, and patient confusion. Recent advances in artificial intelligence have improved medical image classification, but many systems remain limited to single-task predictions and lack breast-specific design, integrated reasoning, and clear reporting for users. In this work, we propose the Breast Imaging Medical Agent (BIMA), a domain-specific, multi-stage AI framework designed to assist in the interpretation and communication of mammogram findings. BIMA combines deep learning–based image analysis with large language model reasoning to support a sequential diagnostic workflow. Low-energy mammogram images are first processed by convolutional neural networks from the ResNet family: ResNet-18 performs view classification (craniocaudal vs. mediolateral oblique) to ensure correct orientation handling, while ResNet-50 performs breast density (ACR) classification because of its ability to capture complex tissue patterns. The classification outputs are then passed to a GPT-5–based reasoning module, which generates structured, clinically meaningful explanations of the results. Finally, BIMA produces a radiology-style report for end users, translating model predictions into clear, accessible summaries that improve interpretability for both clinicians and patients. Experiments were conducted across multiple diagnostic tasks, including view classification, breast density assessment, BI-RADS categorization, and pathology labeling. The proposed system demonstrates how integrating vision, reasoning, and reporting within a unified agent can enhance diagnostic workflows, reduce interpretation burden, and improve doctor-patient communication.
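The sequential workflow described in the abstract (classify, then reason, then report) can be illustrated with a minimal Python sketch. It is not the authors' implementation. The names `Finding`, `classify`, `build_prompt`, and `run_pipeline` are hypothetical, the label sets assume two views and the four ACR density categories A to D, and the classifiers and the reasoning module are passed in as plain callables so that no particular deep learning library or language model API is assumed.

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Assumed label sets (not specified in the abstract).
VIEW_LABELS = ("CC", "MLO")             # craniocaudal, mediolateral oblique
DENSITY_LABELS = ("A", "B", "C", "D")   # ACR breast density categories

# Hypothetical interfaces: a classifier maps an image to raw logits,
# and a reasoner maps a text prompt to a text report.
Classifier = Callable[[object], Sequence[float]]
Reasoner = Callable[[str], str]


@dataclass
class Finding:
    task: str
    label: str
    confidence: float


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw logits to probabilities (numerically stable form)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(task: str, image: object, model: Classifier,
             labels: Sequence[str]) -> Finding:
    """Run one classifier and keep its most probable label."""
    probs = softmax(model(image))
    if len(probs) != len(labels):
        raise ValueError(f"{task}: expected {len(labels)} logits, got {len(probs)}")
    best = max(range(len(labels)), key=lambda i: probs[i])
    return Finding(task, labels[best], probs[best])


def build_prompt(findings: Sequence[Finding]) -> str:
    """Serialize classifier outputs into a structured prompt for the reasoner."""
    lines = [f"- {f.task}: {f.label} (confidence {f.confidence:.2f})"
             for f in findings]
    return ("Explain the following mammogram model outputs and draft a "
            "radiology-style summary:\n" + "\n".join(lines))


def run_pipeline(image: object, view_model: Classifier,
                 density_model: Classifier, reasoner: Reasoner) -> str:
    """Stage 1: view. Stage 2: density. Stage 3: reasoning and report."""
    findings = [
        classify("view", image, view_model, VIEW_LABELS),
        classify("ACR density", image, density_model, DENSITY_LABELS),
    ]
    return reasoner(build_prompt(findings))
```

In a real system, `view_model` and `density_model` would wrap the trained ResNet-18 and ResNet-50 networks, and `reasoner` would wrap a call to the language model. Keeping them as injected callables makes each stage testable in isolation with simple stubs.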