An Agentic, App-Based Multimodal Emotion Recognition System

Jose Marchena

Co-Presenters: Karanpreet Singh, Karanpreet Singh

College: Hennings College of Science Mathematics and Technology

Major: MS.COMPUTER/SCIENCE

Faculty Research Mentor: Yulia Kumar, Juan J

Abstract:

Keywords: Multimodal Emotion Recognition (MER), Valence, Arousal, Agentic AI, Multi-Agent Systems, Multimodal Fusion

Abstract:

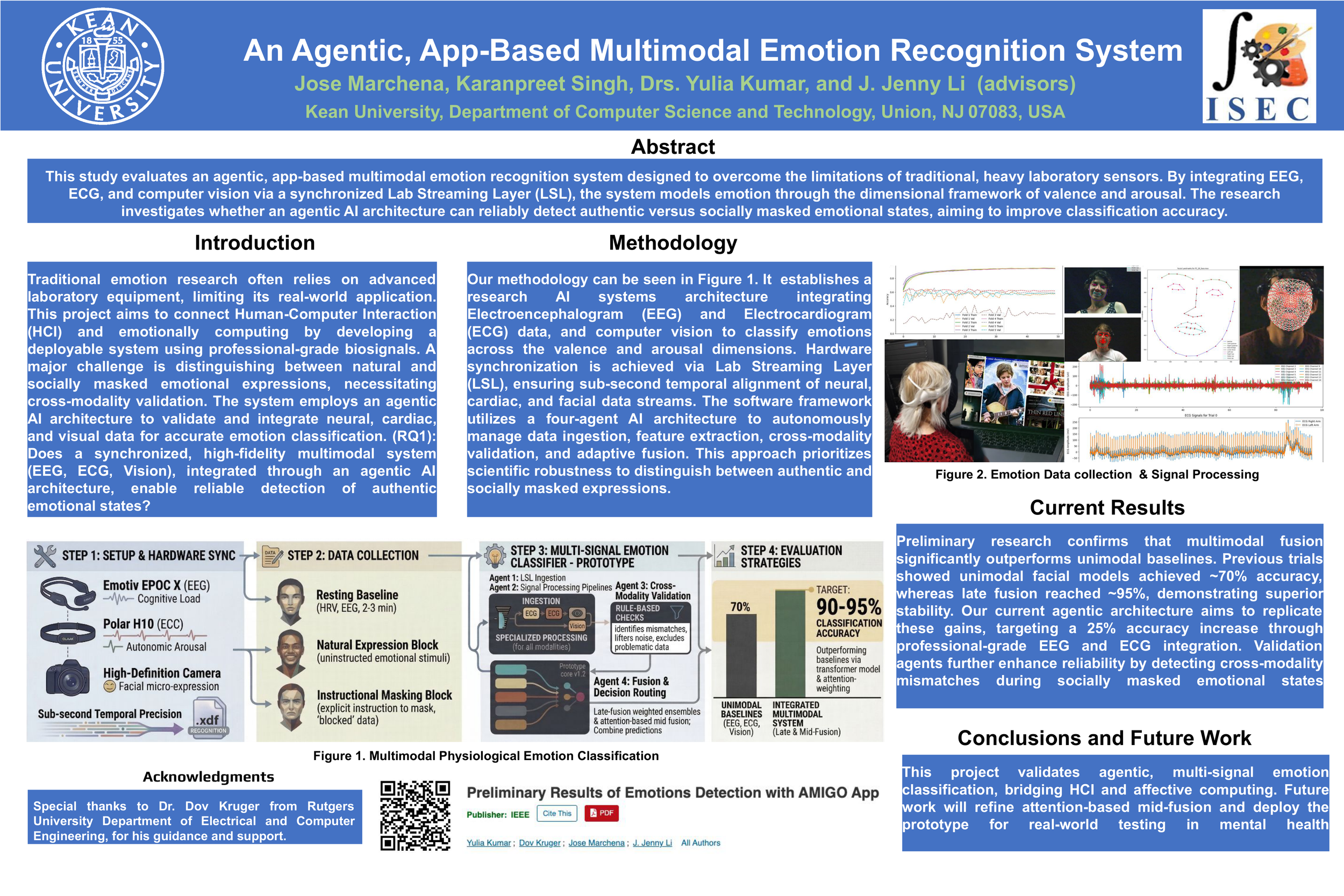

This study designs and evaluates an agentic, app-based multimodal emotion recognition system under real-world constraints. Traditional emotion research often relies on heavy laboratory sensors such as EEG and ECG, limiting scalability and deployment feasibility. Rather than replicating lab-intensive paradigms, this project investigates whether lightweight modalities—vision, audio, and photoplethysmography (PPG)—can provide meaningful performance gains when combined. Emotion is modeled along the dimensional framework of valence and arousal. The central research question asks whether multimodal fusion significantly outperforms unimodal baselines and whether the added system complexity is justified within an adaptive architecture.

A purpose-built, controlled multimodal dataset (N ≈ 25–40 participants) will be collected under standardized, app-based recording conditions to enable clean unimodal and multimodal comparisons. Participants will engage with non-VR elicitation stimuli, including short prompts, structured tasks, and audio-visual materials. Valence and arousal ground truth labels will be obtained via participant self-report immediately following each stimulus block using standardized rating scales. Three synchronized data streams will be recorded: camera-based facial and motion features (valence-dominant), microphone-based prosodic and affective speech features, and physiological signals from a lightweight PPG wearable capturing arousal-related trends. Signals will be segmented into fixed temporal windows (e.g., 5–10 seconds) for feature extraction and temporal modeling. The system implements a four-agent architecture consisting of a Data Ingestion Agent for timestamp alignment and completeness validation, modality-specific processing agents for feature extraction, a Quality and Validation Agent to detect corrupted or noisy segments, and a Fusion and Routing Agent that performs adaptive routing without hard-coded pipelines.

Unimodal baselines (vision-only, audio-only, PPG-only) will be compared against multimodal temporal models (e.g., LSTM/BiLSTM with late or hybrid fusion). Model performance will be assessed using cross-validation, and statistical comparisons between unimodal and multimodal approaches will be conducted using paired testing or bootstrap estimation to evaluate robustness and performance gains relative to system complexity.

This work proposes a scalable multimodal framework that integrates agentic AI principles with deployable sensing constraints, advancing emotion-aware systems beyond controlled laboratory environments