Fine-tuning LLM for text-to-video Generation to Assist Clinicians

Catherine Ramirez

Co-Presenters: Individual Presentation

College: Hennings College of Science Mathematics and Technology

Major: BS.COMPUTER/SCI

Faculty Research Mentor: Navya Martin Kollapally

Abstract:

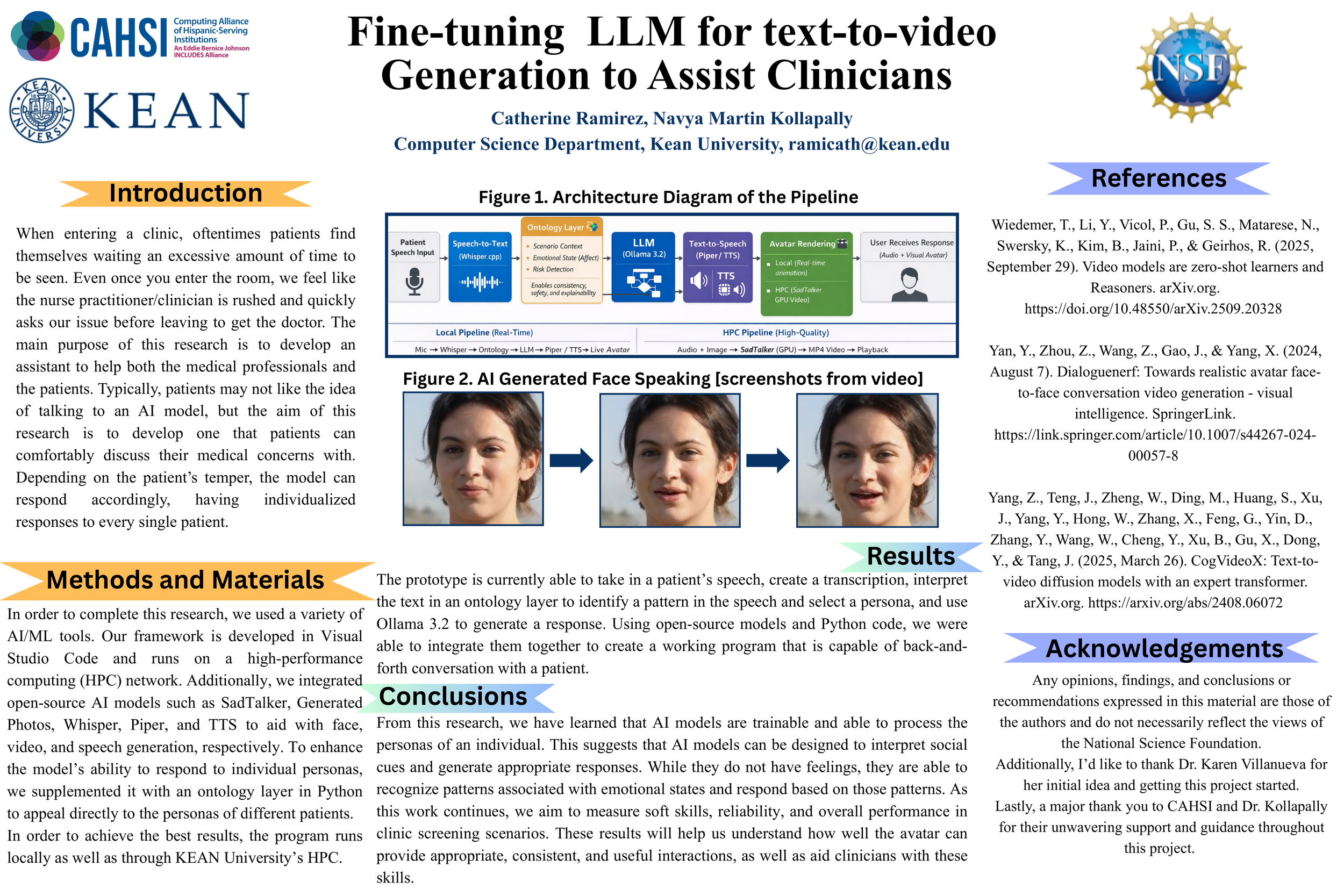

In recent years, we have seen the explosion of artificial intelligence (AI) and large language models (LLMs). We will explore how AI models can be integrated to create a realistic avatar for patient pre-screening in the Kean University clinic. Automatic speech recognition has significant limitations, such as a lack of emotional responsiveness and the inability to understand social cues. This research aims to mitigate these issues, resulting in an AI-based avatar that communicates with patients and produces thoughtful, curated responses. The model captures verbal data from the patient, processes it through an LLM, which then provides an intentionally generated response, tailored to meet the needs of the individual. Taking into account the features of the patient’s speech, including tone, pace, and conversational cues, the avatar will adjust its responses to appeal more to the patient. Furthermore, this study looks at how people's opinions and confidence in AI-based tools can change by having a personalized interactive AI avatar in a real-world setting. The ultimate goal is not to replace a healthcare worker but to have an AI assistant that patients can trust.