KUIPER TTS

Jamaria Hay

Co-Presenters: Jcunha Cunha

College: Hennings College of Science, Mathematics and Technology

Major: BS.COMPUTER/SCI

Faculty Research Mentor: Yulia Kumar, Jenny Li, Dov Kruger

Abstract:

Title: Developing an Offline Text-to-Speech System Using Piper with AI

Students: Jamaria Hay, Jcunha Cunha

Professors: Yulia Kumar, Jenny Li (advisors), Dov Kruger (Rutgers ECE)

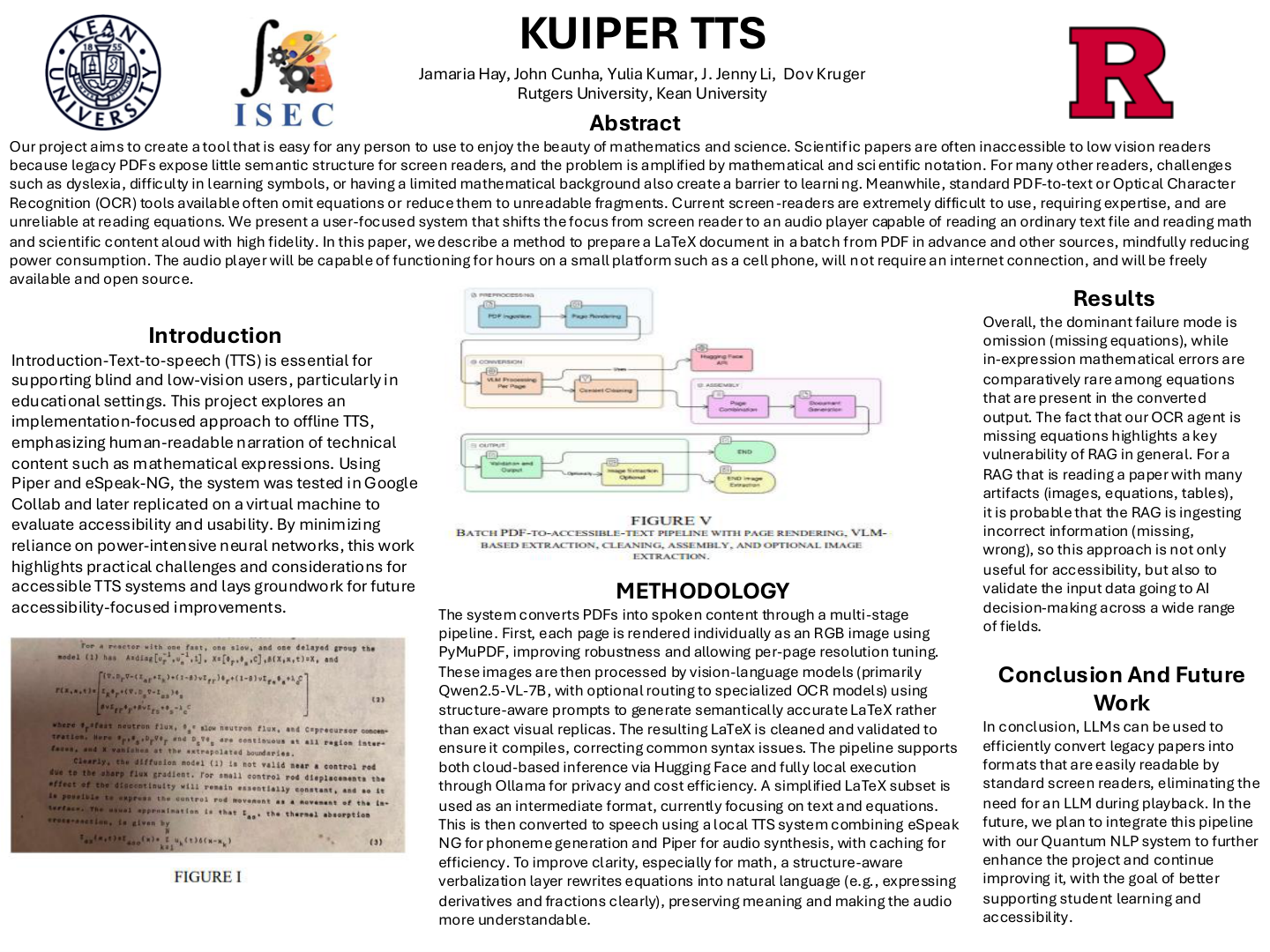

Text-to-speech (TTS) technologies are essential tools for improving accessibility for blind, low-vision, and hands-free users, including drivers. Many modern TTS systems rely on cloud-based neural networks, which raises concerns about power consumption, privacy, and deployment constraints. The objective of this internship research was to explore the development and implementation of an offline, energy-efficient TTS engine using the open-source Piper framework, with an emphasis on accessibility and efficient system performance.

This project followed an implementation-focused approach conducted in collaboration with the faculty researchers listed above. Initial attempts to install and configure Piper in a local computing environment revealed limitations related to system compatibility and dependencies. As a result, cloud-based and virtualized environments were adopted to support development. Piper was successfully installed and tested in Google Colab, where a dataset of .wav audio files provided by a faculty mentor was used to examine the model training workflow. The installation was later replicated on a virtual machine using identical command-line procedures to evaluate portability, reproducibility, and accessibility for users.

The project was guided by a set of accessibility-focused goals: enabling offline text-to-speech conversion, minimizing reliance on neural networks to reduce power usage, efficiently integrating espeak-ng, and supporting accurate pronunciation of heteronyms (words spelled alike but pronounced differently, such as "lead"), names, and words of foreign-language origin. Additional considerations included reading plain text and Markdown files with minimal formatting to prioritize content clarity for blind and low-vision users.
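As an illustration of the goal of reading Markdown files with minimal formatting, the following is a minimal sketch of how Markdown markup might be reduced to plain text before it is passed to a speech engine. It is not the project's implementation: the function name `markdown_to_plain` and the choice to skip fenced code blocks entirely are assumptions made for this example, and it handles only a few common Markdown constructs using Python's standard library.

```python
import re


def markdown_to_plain(text: str) -> str:
    """Reduce common Markdown markup to plain text (hypothetical helper)."""
    lines = []
    in_fence = False
    for line in text.splitlines():
        # Toggle on fenced code blocks and skip their contents (an assumption:
        # reading raw code aloud is rarely useful to a listener).
        if line.strip().startswith("```"):
            in_fence = not in_fence
            continue
        if in_fence:
            continue
        line = re.sub(r"^\s{0,3}#{1,6}\s+", "", line)          # heading markers
        line = re.sub(r"^\s*([-*+]|\d+\.)\s+", "", line)       # list markers
        line = re.sub(r"!\[([^\]]*)\]\([^)]*\)", r"\1", line)  # images: keep alt text
        line = re.sub(r"\[([^\]]*)\]\([^)]*\)", r"\1", line)   # links: keep link text
        line = re.sub(r"\*+|`+", "", line)                     # asterisks and backticks
        # Remove underscores used for emphasis, but keep those inside words
        # such as snake_case identifiers.
        line = re.sub(r"(?<!\w)_{1,2}|_{1,2}(?!\w)", "", line)
        lines.append(line.strip())
    # Collapse runs of blank lines so paragraph pauses stay uniform.
    return re.sub(r"\n{3,}", "\n\n", "\n".join(lines)).strip()


if __name__ == "__main__":
    sample = "# Title\n\nSome **bold** and _italic_ text with a [link](http://example.com)."
    print(markdown_to_plain(sample))
```

The resulting string could then be handed to whichever synthesis step is in use. A fuller solution would likely rely on a real Markdown parser rather than regular expressions, since nested or unusual markup is not covered here.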

This work contributes practical insight into the challenges and considerations involved in building privacy-conscious, accessible TTS systems. Future efforts may focus on completing model training, improving pronunciation accuracy, and evaluating system performance across real-world accessibility use cases.

Keywords:

Text-to-Speech, Accessibility, Piper, Speech Synthesis, Offline Computing