Solicitation Wizard: Retrieval Configuration Sensitivity in Multi-Document RAG Systems for Grant Requirement Extraction

Jose Marchena

Co-Presenters: Individual Presentation

College: Hennings College of Science, Mathematics and Technology

Major: MS.COMPUTER/SCIENCE

Faculty Research Mentor: Yulia Kumar, Juan J

Abstract:

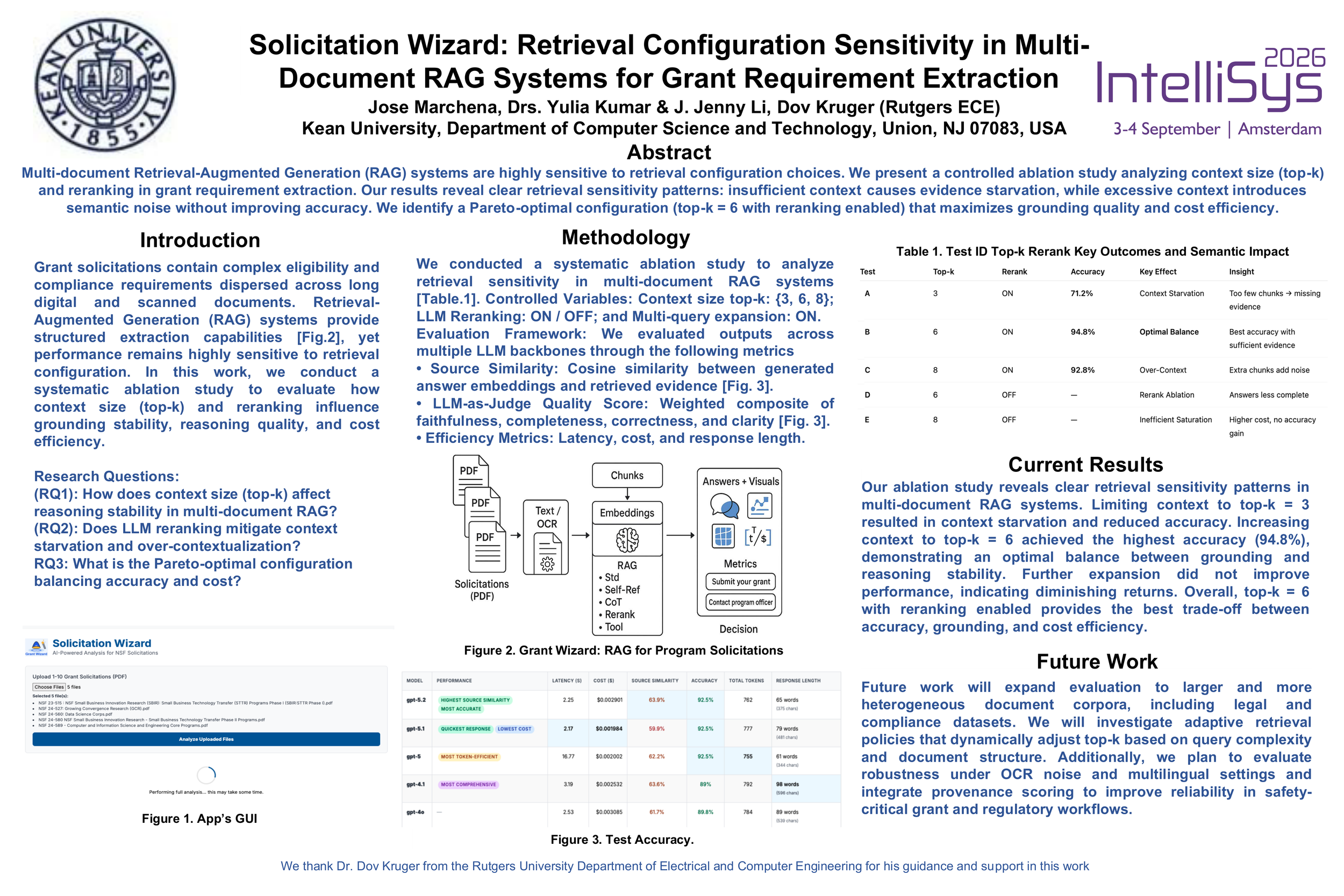

Researchers and administrators increasingly face long, heterogeneous solicitation documents in which deadlines, eligibility, and compliance requirements are scattered across dense text, complex layouts, and scanned pages. Conventional PDF readers and keyword search tools struggle with image-based documents and dispersed constraints, forcing time-consuming manual review that risks omissions. We present Solicitation Wizard (SW), an AI-powered, retrieval-augmented framework designed to extract, organize, and answer questions from digital and scanned solicitations with transparency and verifiable provenance.

SW integrates robust text extraction, dense semantic retrieval, and strategy-aware Large Language Model reasoning to deliver precise, context-sensitive answers to natural-language queries. The system supports self-critique, reranking, and tool-aware reasoning to improve factual completeness and reliability. Its architecture follows four coordinated stages: (1) ingestion routes native PDFs through PyMuPDF and applies OCR as a fallback for scanned or low-quality pages; (2) extracted text is split into overlapping passages, embedded with modern encoders, and indexed in FAISS for efficient similarity search; (3) multi-strategy Retrieval-Augmented Generation (RAG) reasoning applies five complementary strategies (Standard, Self-Reflective, Chain-of-Thought, Reranking, and Tool-Augmented RAG) to balance accuracy, interpretability, and cost; and (4) reporting and interaction provide summaries, Q&A, and checklists alongside interactive visual analytics.
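Stages (1) and (2) can be illustrated with a minimal sketch. It is not SW's implementation: the function names, the `min_chars` threshold, and the word-based window sizes are assumptions chosen for illustration, and the OCR engine is passed in as a hypothetical callable rather than tied to a specific library.

```python
from collections.abc import Callable


def page_text_with_ocr_fallback(
    native_text: str,
    run_ocr: Callable[[], str],
    min_chars: int = 20,  # assumed threshold for "this page has usable text"
) -> str:
    """Return the natively extracted text, or call OCR if it looks empty."""
    if len(native_text.strip()) >= min_chars:
        return native_text
    return run_ocr()


def split_into_passages(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    passages = []
    for start in range(0, len(words), step):
        passages.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return passages
```

The overlap means a requirement that straddles a passage boundary still appears whole in at least one passage, at the cost of indexing some words twice.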

SW includes three-dimensional PCA projections of passage embeddings, cosine-similarity heatmaps to expose redundancy and coverage, and dynamic word clouds to surface salient terminology. Side-by-side strategy comparisons allow users to inspect provenance, trace reasoning, and drill down to exact supporting passages.
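The data behind a cosine-similarity heatmap is a pairwise similarity matrix over passage embeddings. The sketch below uses only the standard library; the `redundant_pairs` helper and its 0.95 threshold are illustrative assumptions, not parameters reported for SW.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def similarity_matrix(embeddings: list[list[float]]) -> list[list[float]]:
    """Pairwise cosine similarities; entry [i][j] compares passages i and j."""
    return [[cosine_similarity(u, v) for v in embeddings] for u in embeddings]


def redundant_pairs(
    embeddings: list[list[float]], threshold: float = 0.95
) -> list[tuple[int, int]]:
    """Index pairs (i < j) whose similarity meets an assumed threshold."""
    matrix = similarity_matrix(embeddings)
    n = len(embeddings)
    return [
        (i, j)
        for i in range(n)
        for j in range(i + 1, n)
        if matrix[i][j] >= threshold
    ]
```

Bright off-diagonal cells in the heatmap correspond to the pairs this helper returns, which is how redundancy between passages becomes visible.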

We evaluated SW on current solicitations, archived calls, non-solicitation research papers, and scanned PDFs. On an 8.1 MB contemporary solicitation, Standard RAG produced answers in 11.2 seconds at an estimated cost of $0.022. More advanced strategies improved factual completeness with moderate overhead (≈12.7–15.8 seconds, $0.024–$0.032). OCR-enabled scans maintained responsiveness within single-digit seconds. Non-solicitation documents exhibited similar latency with proportionally lower costs.
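The overhead of the advanced strategies relative to Standard RAG follows directly from the figures above. The short calculation below is a sketch using the reported (approximate) range endpoints; the variable names are illustrative.

```python
def relative_overhead(baseline: float, value: float) -> float:
    """Percentage increase of `value` over `baseline`."""
    return (value - baseline) / baseline * 100.0


# Figures reported for the 8.1 MB solicitation (advanced values are approximate).
baseline_latency_s, baseline_cost_usd = 11.2, 0.022
advanced_latency_s = (12.7, 15.8)
advanced_cost_usd = (0.024, 0.032)

latency_overhead_pct = [
    round(relative_overhead(baseline_latency_s, v), 1) for v in advanced_latency_s
]
cost_overhead_pct = [
    round(relative_overhead(baseline_cost_usd, v), 1) for v in advanced_cost_usd
]
```

On these endpoints the advanced strategies add roughly 13 to 41 percent latency and roughly 9 to 45 percent cost over Standard RAG.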

SW advances document understanding by unifying OCR-aware ingestion, dense retrieval, multi-strategy reasoning, and user-visible evaluation for time-sensitive, high-stakes documents. By exposing provenance, latency, and cost, and producing actionable outputs such as summaries and compliance checklists, SW converts sprawling solicitations into timely, defensible guidance. The system is model-agnostic and extensible, offering a transferable template for transparent, domain-aware document analysis across institutions.